Chapter 5: Experimental and Quasi-Experimental Designs

CASE STUDY: The Impact of Teen Court

An Experimental Evaluation of Teen Courts1

Is teen court more effective at reducing recidivism and improving attitudes than traditional juvenile justice processing?

Researchers randomly assigned 168 juvenile offenders ages 11 to 17 from four different counties in Maryland to either teen court as experimental group members or to traditional juvenile justice processing as control group members. (Note: Discussion on the technical aspects of experimental designs, including random assignment, is found in detail later in this chapter.) Of the 168 offenders, 83 were assigned to teen court and 85 were assigned to regular juvenile justice processing through random assignment. Of the 83 offenders assigned to the teen court experimental group, only 56 (67%) agreed to participate in the study. Of the 85 youth randomly assigned to normal juvenile justice processing, only 51 (60%) agreed to participate in the study.

Upon assignment to teen court or regular juvenile justice processing, all offenders entered their respective sanction. Approximately four months later, offenders in both the experimental group (teen court) and the control group (regular juvenile justice processing) were asked to complete a post-test survey inquiring about a variety of behaviors (frequency of drug use, delinquent behavior, variety of drug use) and attitudinal measures (social skills, rebelliousness, neighborhood attachment, belief in conventional rules, and positive self-concept). The study researchers also collected official re-arrest data for 18 months starting at the time of offender referral to juvenile justice authorities.

Teen court participants self-reported higher levels of delinquency than those processed through regular juvenile justice processing. According to official re-arrests, teen court youth were re-arrested at a higher rate and incurred a higher average number of total arrests than the control group. Teen court offenders also reported significantly lower scores on survey items designed to measure their “belief in conventional rules” compared to offenders processed through regular juvenile justice avenues. Other attitudinal and opinion measures did not differ significantly between the experimental and control group members based on their post-test responses. In sum, those youth randomly assigned to teen court fared worse than control group members who were not randomly assigned to teen court.

Limitations with the Study Procedure

Limitations are inherent in any research study and those research efforts that utilize experimental designs are no exception. It is important to consider the potential impact that a limitation of the study procedure could have on the results of the study.

In the current study, one potential limitation is that teen courts from four different counties in Maryland were utilized. Because of the diversity in teen court sites, it is possible that there were differences in procedure between the four teen courts and such differences could have impacted the outcomes of this study. For example, perhaps staff members at one teen court were more punishment-oriented than staff members at the other county teen courts. This philosophical difference may have affected treatment delivery and hence experimental group members’ belief in conventional rules and recidivism. Although the researchers monitored each teen court to help ensure treatment consistency between study sites, it is possible that differences existed in the day-to-day operation of the teen courts that may have affected participant outcomes. This same limitation might also apply to control group members who were sanctioned with regular juvenile justice processing in four different counties.

A researcher must also consider the potential for differences between the experimental and control group members. Although the offenders were randomly assigned to the experimental or control group, and the assumption is that the groups were equivalent to each other prior to program participation, the researchers in this study were only able to compare the experimental and control groups on four variables: age, school grade, gender, and race. It is possible that the experimental and control group members differed by chance on one or more factors not measured or available to the researchers. For example, perhaps a large number of teen court members experienced problems at home that can explain their more dismal post-test results compared to control group members without such problems. A larger sample of juvenile offenders would likely have helped to minimize any differences between the experimental and control group members. The collection of additional information from study participants would have also allowed researchers to be more confident that the experimental and control group members were equivalent on key pieces of information that could have influenced recidivism and participant attitudes.
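The point about sample size can be illustrated with a small simulation. The sketch below is not drawn from the teen court study: the unmeasured trait (for example, problems at home), its assumed 30% prevalence, and the function name `average_imbalance` are all hypothetical. It only shows how chance differences between two randomly assigned groups tend to shrink as the number of subjects grows.

```python
import random

def average_imbalance(n_subjects, prevalence=0.30, trials=500, seed=1):
    """Average absolute gap between the two groups in the share of subjects
    who have a hypothetical unmeasured trait, over many random assignments."""
    rng = random.Random(seed)
    total_gap = 0.0
    for _ in range(trials):
        # Each subject has the trait (True) with the assumed prevalence.
        subjects = [rng.random() < prevalence for _ in range(n_subjects)]
        rng.shuffle(subjects)  # random assignment: order is decided by chance
        half = n_subjects // 2
        experimental, control = subjects[:half], subjects[half:]
        gap = abs(sum(experimental) / len(experimental)
                  - sum(control) / len(control))
        total_gap += gap
    return total_gap / trials

small = average_imbalance(100)
large = average_imbalance(2000)
print(f"Average gap with 100 subjects:  {small:.3f}")
print(f"Average gap with 2000 subjects: {large:.3f}")
```

Under these assumptions the average gap is noticeably smaller with 2,000 subjects than with 100, which is the intuition behind the claim that a larger sample helps minimize chance differences between groups.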

Finally, while 168 juvenile offenders were randomly assigned to either the experimental or control group, not all offenders agreed to participate in the evaluation. Remember that of the 83 offenders assigned to the teen court experimental group, only 56 (67%) agreed to participate in the study. Of the 85 youth randomly assigned to normal juvenile justice processing, only 51 (60%) agreed to participate in the study. While this limitation is unavoidable, it still could have influenced the study. Perhaps those 27 offenders who declined to participate in the teen court group differed significantly from the 56 who agreed to participate. If so, it is possible that the differences among those two groups could have impacted the results of the study. For example, perhaps the 27 youths who were randomly assigned to teen court but did not agree to be a part of the study were some of the least risky of potential teen court participants—less serious histories, better attitudes to begin with, and so on. In this case, perhaps the most risky teen court participants agreed to be a part of the study, and as a result of being more risky, this led to more dismal delinquency outcomes compared to the control group at the end of each respective program. Because parental consent was required for the study authors to be able to compare those who declined to participate in the study to those who agreed, it is unknown if the participants and nonparticipants differed significantly on any variables among either the experimental or control group. Moreover, of the resulting 107 offenders who took part in the study, only 75 offenders accurately completed the post-test survey measuring offending and attitudinal outcomes.
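The shrinking sample described above can be traced with simple arithmetic. The short sketch below uses only the counts reported in the case study; the variable names are illustrative.

```python
# Counts reported in the teen court case study.
assigned = {"teen court": 83, "regular processing": 85}
consented = {"teen court": 56, "regular processing": 51}
completed_post_test = 75  # reported for both groups combined

total_assigned = sum(assigned.values())    # all randomly assigned youth
total_consented = sum(consented.values())  # youth who agreed to participate

# Share of each randomly assigned group that agreed to participate.
consent_rates = {group: consented[group] / assigned[group] for group in assigned}
for group, rate in consent_rates.items():
    print(f"{group}: {consented[group]} of {assigned[group]} consented ({rate:.0%})")

# Share of the remaining sample at each later stage.
survey_rate_among_consented = completed_post_test / total_consented
survey_rate_among_assigned = completed_post_test / total_assigned
print(f"Consented overall: {total_consented} of {total_assigned}")
print(f"Completed post-test, of those who consented: {survey_rate_among_consented:.0%}")
print(f"Completed post-test, of all randomly assigned: {survey_rate_among_assigned:.0%}")
```

Read this way, the self-reported outcomes rest on fewer than half of the 168 youth who were originally randomized, which is why attrition deserves attention alongside the other limitations.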

Again, despite the experimental nature of this study, such limitations could have impacted the study results and must be considered.

Teen courts are generally designed to deal with nonserious first time offenders before they escalate to more serious and chronic delinquency. Innovative programs such as “Scared Straight” and juvenile boot camps have inspired an increase in teen court programs across the country, although there is little evidence regarding their effectiveness compared to traditional sanctions for youthful offenders. This study provides more specific evidence as to the effectiveness of teen courts relative to normal juvenile justice processing. Researchers learned that teen court participants fared worse than those in the control group. The potential labeling effects of teen court, including stigma among peers, especially where the offense may have been very minor, may be more harmful than doing less or nothing. The real impact of this study lies in the recognition that teen courts and similar sanctions for minor offenders may do more harm than good.

One important impact of this study is that it utilized an experimental design to evaluate the effectiveness of a teen court compared to traditional juvenile justice processing. Despite the study’s limitations, by using an experimental design it improved upon previous teen court evaluations by attempting to ensure any results were in fact due to the treatment, not some difference between the experimental and control group. This study also utilized both official and self-report measures of delinquency, in addition to self-report measures on such factors as self-concept and belief in conventional rules, which have been generally absent from teen court evaluations. The study authors also attempted to gauge the comparability of the experimental and control groups on factors such as age, gender, and race to help make sure study outcomes were attributable to the program, not the participants.

In This Chapter You Will Learn

The four components of experimental and quasi-experimental research designs and their function in answering a research question

The differences between experimental and quasi-experimental designs

The importance of randomization in an experimental design

The types of questions that can be answered with an experimental or quasi-experimental research design

About the three factors required for a causal relationship

That a relationship between two or more variables may appear causal, but may in fact be spurious, or explained by another factor

That experimental designs are relatively rare in criminal justice and why

About common threats to internal validity or alternative explanations to what may appear to be a causal relationship between variables

Why experimental designs are superior to quasi-experimental designs for eliminating or reducing the potential of alternative explanations

Introduction

The teen court evaluation that began this chapter is an example of an experimental design. The researchers of the study wanted to determine whether teen court was more effective at reducing recidivism and improving attitudes compared to regular juvenile justice case processing. In short, the researchers were interested in the relationship between variables—the relationship of teen court to future delinquency and other outcomes. When researchers are interested in whether a program, policy, practice, treatment, or other intervention impacts some outcome, they often utilize a specific type of research method/design called experimental design. Although there are many types of experimental designs, the foundation for all of them is the classic experimental design. This research design, and some typical variations of this experimental design, are the focus of this chapter.

Although the classic experiment may be appropriate to answer a particular research question, there are barriers that may prevent researchers from using this or another type of experimental design. In these situations, researchers may turn to quasi-experimental designs. Quasi-experiments include a group of research designs that are missing a key element found in the classic experiment and other experimental designs (hence the term “quasi” experiment). Despite this missing part, quasi-experiments are similar in structure to experimental designs and are used to answer similar types of research questions. This chapter will also focus on quasi-experiments and how they are similar to and different from experimental designs.

Uncovering the relationship between variables, such as the impact of teen court on future delinquency, is important in criminal justice and criminology, just as it is in other scientific disciplines such as education, biology, and medicine. Indeed, whereas criminal justice researchers may be interested in whether a teen court reduces recidivism or improves attitudes, medical field researchers may be concerned with whether a new drug reduces cholesterol, or an education researcher may be focused on whether a new teaching style leads to greater academic gains. Across these disciplines and topics of interest, the experimental design is appropriate. In fact, experimental designs are used in all scientific disciplines; the only thing that changes is the topic. Specific to criminal justice, below is a brief sampling of the types of questions that can be addressed using an experimental design:

Does participation in a correctional boot camp reduce recidivism?

What is the impact of an in-cell integration policy on inmate-on-inmate assaults in prisons?

Does police officer presence in schools reduce bullying?

Do inmates who participate in faith-based programming while in prison have a lower recidivism rate upon their release from prison?

Do police sobriety checkpoints reduce drunken driving fatalities?

What is the impact of a no-smoking policy in prisons on inmate-on-inmate assaults?

Does participation in a domestic violence intervention program reduce repeat domestic violence arrests?

A focus on the classic experimental design will demonstrate the usefulness of this research design for addressing criminal justice questions about cause and effect relationships. Particular attention is paid to the classic experimental design because it serves as the foundation for all other experimental and quasi-experimental designs, some of which are covered in this chapter. As a result, a clear understanding of the components, organization, and logic of the classic experimental design will facilitate an understanding of other experimental and quasi-experimental designs examined in this chapter. It will also allow the reader to better understand the results produced from those various designs, and importantly, what those results mean. It is a truism that the results of a research study are only as “good” as the design or method used to produce them. Therefore, understanding the various experimental and quasi-experimental designs is the key to becoming an informed consumer of research.

The Challenge of Establishing Cause and Effect

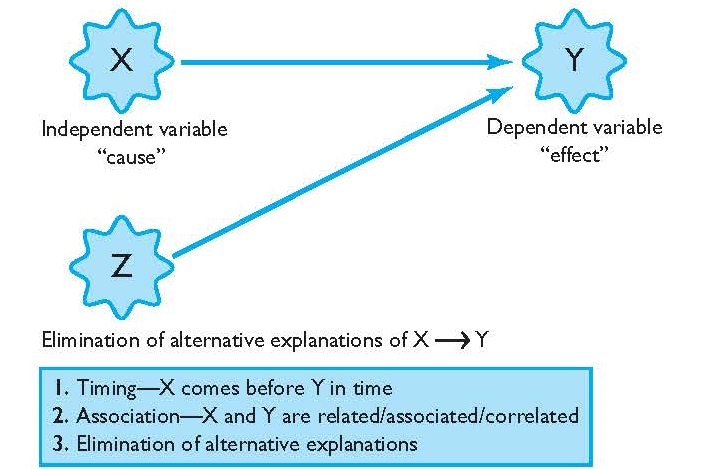

Researchers interested in explaining the relationship between variables, such as whether a treatment program impacts recidivism, are interested in causation or causal relationships. In a simple example, a causal relationship exists when X (independent variable) causes Y (dependent variable), and there are no other factors (Z) that can explain that relationship. For example, offenders who participated in a domestic violence intervention program (X–domestic violence intervention program) experienced fewer re-arrests (Y–re-arrests) than those who did not participate in the domestic violence program, and no other factor other than participation in the domestic violence program can explain these results. The classic experimental design is superior to other research designs in uncovering a causal relationship, if one exists. Before a causal relationship can be established, however, there are three conditions that must be met (see Figure 5.1).2

FIGURE 5.1 | The Cause and Effect Relationship

Timing The first condition for a causal relationship is timing. For a causal relationship to exist, it must be shown that the independent variable or cause (X) preceded the dependent variable or outcome (Y) in time. A decrease in domestic violence re-arrests (Y) cannot occur before participation in a domestic violence reduction program (X), if the domestic violence program is proposed to be the cause of fewer re-arrests. Ensuring that cause comes before effect is not sufficient to establish that a causal relationship exists, but it is one requirement that must be met for a causal relationship.

Association In addition to timing, there must also be an observable association between X and Y, the second necessary condition for a causal relationship. Association is also commonly referred to as covariance or correlation. When an association or correlation exists, this means there is some pattern of relationship between X and Y: as X changes by increasing or decreasing, Y also changes by increasing or decreasing. Here, the notion of X and Y increasing or decreasing can mean an actual increase/decrease in the quantity of some factor, such as an increase/decrease in the number of prison terms or days in a program or re-arrests. It can also refer to an increase/decrease in a particular category, for example, from nonparticipation in a program to participation in a program. For instance, subjects who participated in a domestic violence reduction program (X) incurred fewer domestic violence re-arrests (Y) than those who did not participate in the program. In this example, X and Y are associated: as X changes or increases from nonparticipation to participation in the domestic violence program, Y or the number of re-arrests for domestic violence decreases.

Associations between X and Y can occur in two different directions: positive or negative. A positive association means that as X increases, Y increases, or, as X decreases, Y decreases. A negative association means that as X increases, Y decreases, or, as X decreases, Y increases. In the example above, the association is negative—participation in the domestic violence program was associated with a reduction in re-arrests. This is also sometimes called an inverse relationship.
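The direction of an association can be checked numerically with a correlation coefficient. The sketch below computes Pearson's r by hand for two small data sets. All of the numbers are hypothetical and were invented only to show one negative and one positive association; they do not come from any study discussed in this chapter.

```python
from math import sqrt

def pearson_r(xs, ys):
    """Pearson correlation coefficient for two equal-length lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    covariation = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs)
    spread_y = sum((y - mean_y) ** 2 for y in ys)
    return covariation / sqrt(spread_x * spread_y)

# Hypothetical data: X is program participation (0 = no, 1 = yes),
# Y is the number of domestic violence re-arrests.
participation = [0, 0, 0, 0, 1, 1, 1, 1]
re_arrests = [3, 2, 4, 3, 1, 0, 2, 1]
r_negative = pearson_r(participation, re_arrests)

# Hypothetical data: X is the number of prior prison terms,
# Y is the number of later re-arrests.
prior_terms = [0, 1, 1, 2, 3, 3, 4, 5]
later_re_arrests = [0, 1, 0, 2, 2, 3, 3, 4]
r_positive = pearson_r(prior_terms, later_re_arrests)

print(f"Participation and re-arrests: r = {r_negative:.2f} (negative association)")
print(f"Prior terms and re-arrests:   r = {r_positive:.2f} (positive association)")
```

A coefficient below zero corresponds to a negative (inverse) association and a coefficient above zero to a positive one. Neither value says anything about whether the relationship is causal.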

Elimination of Alternative Explanations Although participation in a domestic violence program may be associated with a reduction in re-arrests, this does not mean for certain that participation in the program was the cause of reduced re-arrests. Just as timing by itself does not imply a causal relationship, association by itself does not imply a causal relationship. For example, instead of the program being the cause of a reduction in re-arrests, perhaps several of the program participants died shortly after completion of the domestic violence program and thus were not able to engage in domestic violence (and their deaths were unknown to the researcher tracking re-arrests). Perhaps a number of the program participants moved out of state and domestic violence re-arrests occurred but were not able to be uncovered by the researcher. Perhaps those in the domestic violence program experienced some other event, such as the trauma of a natural disaster, and that experience led to a reduction in domestic violence, an event not connected to the domestic violence program. If any of these situations occurred, it might appear that the domestic violence program led to fewer re-arrests. However, the observed reduction in re-arrests can actually be attributed to a factor unrelated to the domestic violence program.

The previous discussion leads to the third and final necessary consideration in determining a causal relationship—elimination of alternative explanations. This means that the researcher must rule out any other potential explanation of the results, except for the experimental condition such as a program, policy, or practice. Accounting for or ruling out alternative explanations is much more difficult than ensuring timing and association. Ruling out all alternative explanations is difficult because there are so many potential other explanations that can wholly or partly explain the findings of a research study. This is especially true in the social sciences, where researchers are often interested in relationships explaining human behavior. Because of this difficulty, associations by themselves are sometimes mistaken as causal relationships when in fact they are spurious. A spurious relationship is one where it appears that X and Y are causally related, but the relationship is actually explained by something other than the independent variable, or X.

One only needs to go so far as the daily newspaper to find headlines and stories of mere associations being mistaken, assumed, or represented as causal relationships. For example, a newspaper headline recently proclaimed “Churchgoers live longer.”3 An uninformed consumer may interpret this headline as evidence of a causal relationship (that going to church by itself will lead to a longer life), but the astute consumer would note possible alternative explanations. For example, people who go to church may live longer because they tend to live healthier lifestyles and tend to avoid risky situations. These are two probable alternative explanations to the relationship independent of simply going to church. In another example, researchers David Kalist and Daniel Lee explored the relationship between first names and delinquent behavior in their manuscript titled “First Names and Crime: Does Unpopularity Spell Trouble?”4 Kalist and Lee (2009) found that unpopular names are associated with juvenile delinquency. In other words, those individuals with the most unpopular names were more likely to be delinquent than those with more popular names. According to the authors, it is not necessarily someone’s name that leads to delinquent behavior, but rather, the most unpopular names also tend to be correlated with individuals who come from disadvantaged home environments and experience a low socio-economic status of living. Rightly noted by the authors, these alternative explanations help to explain the link between someone’s name and delinquent behavior, a link that is not causal.

A frequently cited example provides more insight to the claim that an association by itself is not sufficient to prove causality. In certain cities in the United States, for example, as ice cream sales increase on a particular day or in a particular month so does the incidence of certain forms of crime. If this association were represented as a causal statement, it would be that ice cream or ice cream sales causes crime. There is an association, no doubt, and let us assume that ice cream sales rose before the increase in crime (timing). Surely, however, this relationship between ice cream sales and crime is spurious. The alternative explanation is that ice cream sales and crime are associated in certain parts of the country because of the weather. Ice cream sales tend to increase in warmer temperatures, and it just so happens that certain forms of crime tend to increase in warmer temperatures as well. This coincidence or association does not mean a causal relationship exists. Additionally, this does not mean that warm temperatures cause crime either. There are plenty of other alternative explanations for the increase in certain forms of crime and warmer temperatures.6 For another example of a study subject to alternative explanations, read the June 2011 news article titled “Less Crime in U.S. Thanks to Videogames.”7 Based on your reading, what are some other potential explanations for the crime drop other than videogames?
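The ice cream example can be mimicked with simulated data. The sketch below is a deliberately simplified illustration, not an analysis of real crime figures: it assumes that a single third factor (temperature) feeds into both ice cream sales and crime, and that neither of those two variables affects the other. As the text cautions, real-world explanations are more numerous than this. The partial correlation formula used here is the standard first-order formula for removing the linear influence of one control variable.

```python
import random
from math import sqrt

def pearson_r(xs, ys):
    """Pearson correlation coefficient for two equal-length lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    covariation = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs)
    spread_y = sum((y - mean_y) ** 2 for y in ys)
    return covariation / sqrt(spread_x * spread_y)

def partial_r(r_xy, r_xz, r_yz):
    """Correlation between X and Y after removing the linear influence of Z."""
    return (r_xy - r_xz * r_yz) / sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))

rng = random.Random(42)
n_days = 2000

# Assumed data-generating process (standardized units, purely hypothetical):
# temperature drives both outcomes; sales and crime never influence each other.
temperature = [rng.gauss(0, 1) for _ in range(n_days)]
ice_cream_sales = [t + rng.gauss(0, 1) for t in temperature]
crime = [t + rng.gauss(0, 1) for t in temperature]

r_raw = pearson_r(ice_cream_sales, crime)
r_controlled = partial_r(
    r_raw,
    pearson_r(ice_cream_sales, temperature),
    pearson_r(crime, temperature),
)

print(f"Ice cream sales and crime:           r = {r_raw:.2f}")
print(f"Same, holding temperature constant: r = {r_controlled:.2f}")
```

In this simulated world the raw association is clearly positive, yet it largely disappears once temperature is held constant. That is the signature of a spurious relationship: X and Y move together only because both respond to something else.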

The preceding examples demonstrate how timing and association can be present, but the final needed condition for a causal relationship is that all alternative explanations are ruled out. While this task is difficult, the classic experimental design helps to ensure these additional explanatory factors are minimized. When other designs are used, such as quasi-experimental designs, the chance that alternative explanations emerge is greater. This potential should become clearer as we explore the organization and logic of the classic experimental design.

CLASSICS IN CJ RESEARCH

Minneapolis Domestic Violence Experiment

Research Study

The Minneapolis Domestic Violence Experiment (MDVE)5

Research Question

Which police action (arrest, separation, or mediation) is most effective at deterring future misdemeanor domestic violence?

Methodology

The experiment began on March 17, 1981, and continued until August 1, 1982. The experiment was conducted in two of Minneapolis’s four police precincts—the two with the highest number of domestic violence reports and arrests. A total of 314 reports of misdemeanor domestic violence were handled by the police during this time frame.

This study utilized an experimental design with the random assignment of police actions. Each police officer involved in the study was given a pad of report forms. Upon a misdemeanor domestic violence call, the officer’s action (arrest, separation, or mediation) was predetermined by the order and color of report forms in the officer’s notebook. Colored report forms were randomly ordered in the officer’s notebook and the color on the form determined the officer response once at the scene. For example, after receiving a call for domestic violence, an officer would turn to his or her report pad to determine the action. If the top form was pink, the action was arrest. If on the next call the top form was a different color, an action other than arrest would occur. All colored report forms were randomly ordered through a lottery assignment method. The result is that all police officer actions to misdemeanor domestic violence calls were randomly assigned. To ensure the lottery procedure was properly carried out, research staff participated in ride-alongs with officers to ensure that officers did not skip the order of randomly ordered forms. Research staff also made sure the reports were received in the order they were randomly assigned in the pad of report forms.
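The logic of the randomly ordered report pad can be sketched in a few lines. This is only an illustration of the idea, not a reconstruction of the study's actual lottery: the number of forms per pad, the equal split across the three actions, and the function name `build_report_pad` are assumptions made for the example.

```python
import random
from collections import Counter

ACTIONS = ["arrest", "separation", "mediation"]

def build_report_pad(forms_per_action, seed=None):
    """Return a pad of report forms in random order.

    Assumes an equal number of forms per action, which the source
    does not specify."""
    rng = random.Random(seed)
    pad = [action for action in ACTIONS for _ in range(forms_per_action)]
    rng.shuffle(pad)  # the "lottery": order is fixed before any call arrives
    return pad

pad = build_report_pad(forms_per_action=5, seed=7)

# On each call, the officer uses whatever form is on top of the pad.
calls_handled = []
for call_number in range(1, 4):
    assigned_action = pad.pop(0)
    calls_handled.append((call_number, assigned_action))
    print(f"Call {call_number}: assigned action is {assigned_action}")

print(f"Forms left in the pad: {len(pad)}")
```

Because the order is set in advance by chance, the action taken on any given call does not depend on the officer's judgment or on the characteristics of the suspect, provided the officer uses the forms in order.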

To examine the relationship of different officer responses to future domestic violence, the researchers examined official arrests of the suspects in a 6-month follow-up period. For example, the researchers examined those initially arrested for misdemeanor domestic violence and how many were subsequently arrested for domestic violence within a 6-month time frame. They did the same procedure for the police actions of separation and mediation. The researchers also interviewed the victim(s) of each incident and asked if a repeat domestic violence incident occurred with the same suspect in the 6-month follow-up period. This allowed researchers to examine domestic violence offenses that may have occurred but did not come to the official attention of police. The researchers then compared official arrests for domestic violence to self-reported domestic violence after the experiment.

Results

Suspects arrested for misdemeanor domestic violence, as opposed to situations where separation or mediation was used, were significantly less likely to engage in repeat domestic violence as measured by official arrest records and victim interviews during the 6-month follow-up period. According to official police records, 10% of those initially arrested engaged in repeat domestic violence in the follow-up period, 19% of those who initially received mediation engaged in repeat domestic violence, and 24% of those who randomly received separation engaged in repeat domestic violence. According to victim interviews, 19% of those initially arrested engaged in repeat domestic violence, compared to 37% for separation and 33% for mediation. The general conclusion of the experiment was that arrest was preferable to separation or mediation in deterring repeat domestic violence across both official police records and victim interviews.

Limitations with the Study Procedure

A few issues that affected the random assignment procedure occurred throughout the study. First, some officers did not follow the randomly assigned action (arrest, separation, or mediation) as a result of other circumstances that occurred at the scene. For example, if the randomly assigned action was separation, but the suspect assaulted the police officer during the call, the officer might arrest the suspect. Second, some officers simply ignored the assigned action if they felt a particular call for domestic violence required another action. For example, if the action was mediation as indicated by the randomly assigned report form, but the officer felt the suspect should be arrested, he or she may have simply ignored the randomly assigned response and substituted his or her own. Third, some officers forgot their report pads and did not know the randomly assigned course of action to take upon a call of domestic violence. Fourth and finally, the police chief also allowed officers to deviate from the randomly assigned action in certain circumstances. In all of these situations, the random assignment procedures broke down.

Impact on Criminal Justice

The results of the MDVE had a rapid and widespread impact on law enforcement practice throughout the United States. Just two years after the release of the study, a 1986 telephone survey of 176 urban police departments serving cities with populations of 100,000 or more found that 46 percent of the departments preferred to make arrests in cases of minor domestic violence, largely due to the effectiveness of this practice in the Minneapolis Domestic Violence Experiment.8

In an attempt to replicate the findings of the Minneapolis Domestic Violence Experiment, the National Institute of Justice sponsored the Spouse Assault Replication Program. Replication studies were conducted in Omaha, Charlotte, Milwaukee, Miami, and Colorado Springs from 1986–1991. In three of the five replications, offenders randomly assigned to the arrest group had higher levels of continued domestic violence in comparison to other police actions during domestic violence situations.9 Therefore, rather than providing results that were consistent with the Minneapolis Domestic Violence Experiment, the results from the five replication experiments produced inconsistent findings about whether arrest deters domestic violence.10

Despite the findings of the replications, the push to arrest domestic violence offenders has continued in law enforcement. Today many police departments require officers to make arrests in domestic violence situations. In agencies that do not mandate arrest, department policy typically states a strong preference toward arrest. State legislatures have also enacted laws impacting police actions regarding domestic violence. Twenty-one states have mandatory arrest laws while eight have pro-arrest statutes for domestic violence.11

The Classic Experimental Design

Table 5.1 provides an illustration of the classic experimental design.12 It is important to become familiar with the specific notation and organization of the classic experiment before a full discussion of its components and their purpose.

Major Components of the Classic Experimental Design

The classic experimental design has four major components:

1. Treatment

2. Experimental Group and Control Group

3. Pre-Test and Post-Test

4. Random Assignment

Treatment The first component of the classic experimental design is the treatment, and it is denoted by X in the classic experimental design. The treatment can be a number of things—a program, a new drug, or the implementation of a new policy. In a classic experimental design, the primary goal is to determine what effect, if any, a particular treatment had on some outcome. In this way, the treatment can also be considered the independent variable.

TABLE 5.1 | The Classic Experimental Design

| | | Pre-Test | | Post-Test |
|---|---|---|---|---|
| Experimental Group | R | O1 | X | O2 |
| Control Group | R | O1 | | O2 |

Experimental Group = Group that receives the treatment

Control Group = Group that does not receive the treatment

R = Random assignment

O1 = Observation before the treatment, or the pre-test

X = Treatment or the independent variable

O2 = Observation after the treatment, or the post-test

Experimental and Control Groups The second component of the classic experiment is an experimental group and a control group. The experimental group receives the treatment, and the control group does not receive the treatment. There will always be at least one group that receives the treatment in experimental and quasi-experimental designs. In some cases, experiments may have multiple experimental groups receiving multiple treatments.

Pre-Test and Post-Test The third component of the classic experiment is a pre-test and a post-test. A pre-test is a measure of the dependent variable or outcome before the treatment. The post-test is a measure of the dependent variable after the treatment is administered. It is important to note that the post-test is defined based on the stated goals of the program. For example, if the stated goal of a particular program is to reduce re-arrests, the post-test will be a measure of re-arrests after the program. The dependent variable also defines the pre-test. For example, if a researcher wanted to examine the impact of a domestic violence reduction program (treatment or X) on the goal of reducing re-arrests (dependent variable or Y), the pre-test would be the number of domestic violence arrests incurred before the program. Program goals may be numerous and all can constitute a post-test, and hence, the pre-test. For example, perhaps the goal of the domestic violence program is also that participants learn of different pro-social ways to handle domestic conflicts other than resorting to violence. If researchers wanted to examine this goal, the post-test might be subjects’ level of knowledge about pro-social ways to handle domestic conflicts other than violence. The pre-test would then be subjects’ level of knowledge about these pro-social alternatives to violence before they received the treatment program.
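One common way to read the O1 and O2 notation is to compare how much each group changed from pre-test to post-test. The sketch below does this with made-up numbers: the arrest counts are hypothetical, and subtracting the control group's change from the experimental group's change is offered as one simple summary, not as the only way such data are analyzed.

```python
from statistics import mean

# Hypothetical domestic violence arrests per subject.
# O1 = pre-test (before the program), O2 = post-test (after the program).
experimental = {"O1": [3, 2, 4, 3], "O2": [1, 1, 2, 0]}  # received treatment X
control = {"O1": [3, 3, 2, 4], "O2": [3, 2, 2, 3]}       # did not receive X

def change(group):
    """Average post-test score minus average pre-test score."""
    return mean(group["O2"]) - mean(group["O1"])

experimental_change = change(experimental)
control_change = change(control)

# How much more the experimental group changed than the control group.
treatment_estimate = experimental_change - control_change

print(f"Experimental group change: {experimental_change}")
print(f"Control group change:      {control_change}")
print(f"Difference between groups: {treatment_estimate}")
```

In this invented example both groups start with the same average number of arrests, and the experimental group's average falls by more than the control group's. The control group's change shows what might have happened without the treatment, which is why the comparison between groups matters more than either group's change alone.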

Although all designs have a post-test, not all designs have a pre-test. This is because researchers may not have access to, or be able to collect, the information constituting the pre-test. For example, researchers may not be able to determine subjects’ level of knowledge about alternatives to domestic violence before the intervention program if the subjects are already enrolled in the domestic violence intervention program. In other cases, there may be financial barriers to collecting pre-test information. In the teen court evaluation that started this chapter, for example, researchers were not able to collect pre-test information on study participants due to the financial strain it would have placed on the agencies involved in the study.13 There are a number of potential reasons why a pre-test might not be available in a research study. The defining feature, however, is that when a pre-test is used, it is determined by the post-test.

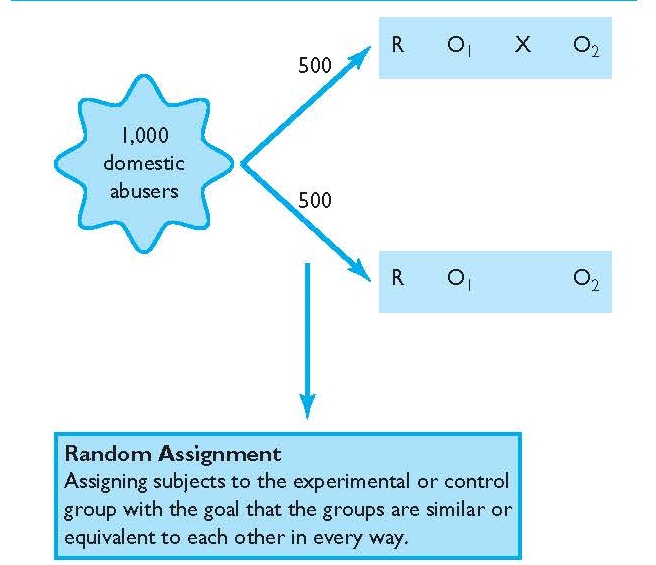

Random Assignment The fourth component of the classic experiment is random assignment. Random assignment refers to a process whereby members of the experimental group and control group are assigned to the two groups through a random and unbiased process. Random assignment should not be mistaken for random selection as discussed in Chapter 3. Random selection refers to selecting a smaller but representative sample from a larger population. For example, a researcher may randomly select a sample from a larger city population for the purposes of sending sample members a mail survey to determine their attitudes on crime. The goal of random selection in this example is to make sure the sample, although smaller in size than the population, accurately represents the larger population.

Random assignment, on the other hand, refers to the process of assigning subjects to either the experimental or control group with the goal that the groups are similar or equivalent to each other in every way (see Figure 5.2). The exception to this rule is that one group gets the treatment and the other does not (see discussion below on why equivalence is so important). Although the concept of randomness is similar in each, the goals of random selection and random assignment are different.14 Experimental designs all feature random assignment, but this is not true of other research designs, in particular quasi-experimental designs.

FIGURE 5.2 | Random Assignment

The classic experimental design is the foundation for all other experimental and quasi-experimental designs because it retains all of the major components discussed above. As mentioned, sometimes designs do not have a pre-test, a control group, or random assignment. Because the pre-test, control group, and random assignment are so critical to the goal of uncovering a causal relationship, if one exists, we explore them further below.

The Logic of the Classic Experimental Design

Consider a research study using the classic experimental design where the goal is to determine if a domestic violence treatment program has any effect on re-arrests for domestic violence. The randomly assigned experimental and control groups are composed of persons who had previously been arrested for domestic violence. The pre-test is a measure of the number of domestic violence arrests before the program, because the goal of the study is to determine whether re-arrests are affected by the treatment. The post-test is the number of re-arrests following the treatment program.

Once randomly assigned, the experimental group members receive the domestic violence program, and the control group members do not. After the program, the researcher will compare the pre-test arrests for domestic violence of the experimental group to post-test arrests for domestic violence to determine if arrests increased, decreased, or remained constant since the start of the program. The researcher will also compare the post-test re-arrests for domestic violence between the experimental and control groups. With this example, we explore the usefulness of the classic experimental design, and the contribution of the pre-test, random assignment, and the control group to the goal of determining whether a domestic violence program reduces re-arrests.

The Pre-Test As a component of the classic experiment, the pre-test allows an examination of change in the dependent variable from before the domestic violence program to after the domestic violence program. In short, a pre-test allows the researcher to determine if re-arrests increased, decreased, or remained the same following the domestic violence program. Without a pre-test, researchers would not be able to determine the extent of change, if any, from before to after the program for either the experimental or control group.

Although the pre-test is a measure of the dependent variable before the treatment, it can also be thought of as a measure whereby the researcher can compare the experimental group to the control group before the treatment is administered. For example, the pre-test helps researchers to make sure both groups are similar or equivalent on previous arrests for domestic violence. The importance of equivalence between the experimental and control groups on previous arrests is discussed below with random assignment.

Random Assignment Random assignment helps to ensure that the experimental and control groups are equivalent before the introduction of the treatment. This is perhaps one of the most critical aspects of the classic experiment and all experimental designs. Although the experimental and control groups will be made up of different people with different characteristics, assigning them to groups via a random assignment process helps to ensure that any differences or biases between the groups are eliminated or minimized. By minimizing bias, we mean that the groups will balance each other out on all factors except the treatment. If they are balanced out on all factors prior to the administration of the treatment, any differences between the groups at the post-test are most plausibly due to the treatment, the only factor that differs between the experimental group and the control group. According to Shadish, Cook, and Campbell: “If implemented correctly, random assignment creates two or more groups of units that are probabilistically similar to each other on the average. Hence, any outcome differences that are observed between those groups at the end of a study are likely to be due to treatment, not to differences between the groups that already existed at the start of the study.”15 Considered in another way, if the experimental and control group differed significantly on any relevant factor other than the treatment, the researcher would not know if the results observed at the post-test are attributable to the treatment or to the differences between the groups.

Consider an example where 500 domestic abusers were randomly assigned to the experimental group and 500 were randomly assigned to the control group. Because they were randomly assigned, we would likely find both more frequent and less frequent domestic violence arrestees in each group, older and younger arrestees in each group, and so on. If random assignment was implemented correctly, it would be highly unlikely that all of the experimental group members were the most serious or frequent arrestees and all of the control group members were less serious and/or less frequent arrestees. While there are no guarantees, we know the chance of this happening is extremely small with random assignment because it is based on probability theory. Thus, except for a chance occurrence, random assignment will result in equivalence between the experimental and control group in much the same way that flipping a coin multiple times will result in heads approximately 50% of the time and tails approximately 50% of the time. Over 1,000 tosses of a coin, for example, should result in roughly 500 heads and 500 tails. While it is possible that flipping a coin 1,000 times will result in heads 1,000 times, or some other major imbalance between heads and tails, the probability of such an outcome is very small.
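The balancing logic above can be illustrated with a short simulation. This is a minimal sketch rather than part of any study: the 1,000 prior-arrest counts are invented (drawn uniformly from 1 to 10), and the function name `randomly_assign` and the seed are assumptions made only for the example.

```python
import random
import statistics

def randomly_assign(subjects, rng):
    """Shuffle a copy of the subjects, then split it in half.

    Returns (experimental_group, control_group).
    """
    shuffled = list(subjects)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]

rng = random.Random(42)  # fixed seed so the run is repeatable

# Hypothetical data: prior domestic violence arrests for 1,000 people.
prior_arrests = [rng.randint(1, 10) for _ in range(1000)]

experimental, control = randomly_assign(prior_arrests, rng)
mean_experimental = statistics.mean(experimental)
mean_control = statistics.mean(control)

# Coin-toss analogy: count heads in 1,000 simulated fair tosses.
heads = sum(rng.random() < 0.5 for _ in range(1000))

print(f"Experimental mean prior arrests: {mean_experimental:.2f}")
print(f"Control mean prior arrests:      {mean_control:.2f}")
print(f"Heads in 1,000 tosses:           {heads}")
```

On any given run the two group means should be close but rarely identical, and the head count should land near 500. How close they come is itself a matter of chance.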

The same logic from above also applies with randomly assigning people to groups, and this can even be done by flipping a coin. By assigning people to groups through a random and unbiased process, like flipping a coin, only by chance (or researcher error) will one group have more of one characteristic than another, on average. If there are no major (also called statistically significant) differences between the experimental and control group before the treatment, the most plausible explanation for the results at the post-test is the treatment.

As mentioned, it is possible by some chance occurrence that the experimental and control group members are significantly different on some characteristic prior to administration of the treatment. To confirm that the groups are in fact similar after they have been randomly assigned, the researcher can examine the pre-test if one is present. If the researcher has additional information on subjects before the treatment is administered, such as age, or any other factor that might influence post-test results at the end of the study, he or she can also compare the experimental and control group on those measures to confirm that the groups are equivalent. Thus, a researcher can confirm that the experimental and control groups are equivalent on information known to the researcher.
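One common way to make such a comparison is to look at the difference in group means relative to its standard error. The sketch below computes Welch's t statistic by hand. The pre-test arrest counts are hypothetical, `welch_t` is a name chosen for this example, and the rule of thumb in the comment is a rough guide only, not a substitute for a full significance test.

```python
import math
import statistics

def welch_t(group_a, group_b):
    """Welch's t statistic: difference in means divided by its standard error."""
    mean_a, mean_b = statistics.mean(group_a), statistics.mean(group_b)
    var_a, var_b = statistics.variance(group_a), statistics.variance(group_b)
    standard_error = math.sqrt(var_a / len(group_a) + var_b / len(group_b))
    return (mean_a - mean_b) / standard_error

# Hypothetical pre-test data: prior arrests for each group member.
experimental_pretest = [2, 3, 1, 4, 2, 3, 5, 2, 3, 1]
control_pretest = [3, 2, 2, 4, 1, 3, 4, 2, 3, 2]

t = welch_t(experimental_pretest, control_pretest)
# Rough guide: a t value near 0 suggests the groups look similar on this
# measure; a value far from 0 (roughly beyond +/-2 in larger samples)
# would prompt a closer look.
print(f"Welch t on pre-test arrests: {t:.2f}")
```

The same function could be applied to any other known pre-treatment measure, such as age.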

Being able to compare the groups on known measures is an important way to ensure the random assignment process “worked.” However, perhaps most important is that randomization also helps to ensure similarity across unknown variables between the experimental and control group. Because random assignment is based on probability theory, it is far more likely that potential differences between the groups that could impact the post-test, known or unknown, will balance out. Without random assignment, it is likely that the experimental and control group would differ on important but unknown factors and such differences could emerge as alternative explanations for the results. For example, if a researcher did not utilize random assignment and instead assigned the first 500 domestic abusers from an ordered list to the experimental group and the last 500 domestic abusers to the control group, one of the groups could be “lopsided” or imbalanced on some important characteristic that could impact the outcome of the study. With random assignment, there is a much higher likelihood that these important characteristics among the experimental and control groups will balance out because every individual has the same chance of being placed into one group versus the other. The probability of one or more characteristics being concentrated into one group and not the other is extremely small with random assignment.

To further illustrate the importance of random assignment to group equivalence, suppose the first 500 domestic violence abusers who were assigned to the experimental group from the ordered list had significantly fewer domestic violence arrests before the program than the last 500 domestic violence abusers on the list. Perhaps this is because the ordered list was organized from least to most chronic domestic abusers. In this instance, the control group would be lopsided concerning number of pre-program domestic violence arrests—they would be more chronic than the experimental group. The arrest imbalance then could potentially explain the post-test results following the domestic violence program. For example, the “less risky” offenders in the experimental group might be less likely to be re-arrested regardless of their participation in the domestic violence program, especially compared to the more chronic domestic abusers in the control group. Because of imbalances between the experimental and control group on arrests before the program was implemented, it would not be known for certain whether an observed reduction in re-arrests after the program for the experimental group was due to the program or the natural result of having less risky offenders in the experimental group. In this instance, the results might be taken to suggest that the program significantly reduces re-arrests. This conclusion might be spurious, however, for the association may simply be due to the fact that the offenders in the experimental group were much different (less frequent offenders) than the control group. Here, the program may have had no effect—the experimental group members may have performed the same regardless of the treatment because they were low-level offenders.
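The ordered-list problem can be made concrete with a simulation in which the program has, by construction, no effect at all. Everything here is an assumption for illustration: the prior-arrest counts are invented, and the rule that each person's re-arrests equal half of their prior arrests (rounded down) is a stand-in for "more chronic offenders are re-arrested more often," not an empirical claim.

```python
import random
import statistics

rng = random.Random(7)  # fixed seed so the run is repeatable

# Hypothetical prior arrests for 1,000 people.
prior_arrests = [rng.randint(1, 10) for _ in range(1000)]

def rearrests(prior):
    """Assumed outcome rule with NO treatment effect: re-arrests depend
    only on prior record (half of prior arrests, rounded down)."""
    return prior // 2

def posttest_gap(experimental, control):
    """Control mean minus experimental mean on post-test re-arrests."""
    return (statistics.mean(rearrests(p) for p in control)
            - statistics.mean(rearrests(p) for p in experimental))

# Ordered list: least to most chronic; the first 500 get the program.
ordered = sorted(prior_arrests)
ordered_gap = posttest_gap(ordered[:500], ordered[500:])

# Random assignment: shuffle first, then split.
shuffled = list(prior_arrests)
rng.shuffle(shuffled)
random_gap = posttest_gap(shuffled[:500], shuffled[500:])

print(f"Gap with ordered-list assignment: {ordered_gap:.2f}")
print(f"Gap with random assignment:       {random_gap:.2f}")
```

Under these assumptions the ordered-list split shows a sizable gap that could be mistaken for a program effect, while the randomly assigned groups differ only slightly.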

The example above suggests that differences between the experimental and control groups based on previous arrest records could have a major impact on the results of a study. Such differences can arise with the lack of random assignment. If subjects were randomly assigned to the experimental and control group, however, there would be a much higher probability that less frequent and more frequent domestic violence arrestees would have been found in both the experimental and control groups and the differences would have balanced out between the groups—leaving any differences between the groups at the post-test attributable to the treatment only.

In summary, random assignment helps to ensure that the experimental and control group members are balanced or equivalent on all factors that could impact the dependent variable or post-test—known or unknown. The only factor they are not balanced or equal on is the treatment. As such, random assignment helps to isolate the impact of the treatment, if any, on the post-test because it increases confidence that the only difference between the groups should be that one group gets the treatment and the other does not. If that is the only difference between the groups, any change in the dependent variable between the experimental and control group must be attributed to the treatment and not an alternative explanation, such as significant arrest history imbalance between the groups (refer to Figure 5.2). This logic also suggests that if the experimental group and control group are imbalanced on any factor that may be relevant to the outcome, that factor then becomes a potential alternative explanation for the results—an explanation that reduces the researcher’s ability to isolate the real impact of the treatment.

WHAT RESEARCH SHOWS: IMPACTING CRIMINAL JUSTICE OPERATIONS

Scared Straight

The 1978 documentary Scared Straight introduced to the public the “Lifer’s Program” at Rahway State Prison in New Jersey. This program sought to decrease juvenile delinquency by bringing at-risk and delinquent juveniles into the prison where they would be “scared straight” by inmates serving life sentences. Participants in the program were talked to and yelled at by the inmates in an effort to scare them. It was believed that the fear felt by the participants would lead to a discontinuation of their problematic behavior so that they would not end up in prison themselves. Although originally touted as a success based on anecdotal evidence, subsequent evaluations of the program and others like it proved otherwise.

Using a classic experimental design, Finckenauer evaluated the original “Lifer’s Program” at Rahway State Prison.16 Participating juveniles were randomly assigned to the experimental group or the control group. Results of the evaluation were not positive. Post-test measures revealed that juveniles who were assigned to the experimental group and participated in the program were actually more seriously delinquent afterwards than those who did not participate in the program. Also using an experimental design with random assignment, Yarborough evaluated the “Juvenile Offenders Learn Truth” (JOLT) program at the State Prison of Southern Michigan at Jackson.17 This program was similar to that of the “Lifer’s Program” only with fewer obscenities used by the inmates. Post-test measurements were taken at two intervals, 3 and 6 months after program completion. Again, results were not positive. Findings revealed no significant differences between those juveniles who attended the program and those who did not.

Other experiments conducted on Scared Straight-like programs further revealed their inability to deter juveniles from future criminality.18 Despite the intuitive popularity of these programs, these evaluations proved that such programs were not successful. In fact, it is postulated that these programs may have actually done more harm than good.

The Control Group The presence of an equivalent control group (created through random assignment) also gives the researcher more confidence that the findings at the post-test are due to the treatment and not some other alternative explanation. This logic is perhaps best demonstrated by considering how interpretation of results is affected without a control group. Absent an equivalent control group, it cannot be known whether the results of the study are due to the program or some other factor. This is because the control group provides a baseline of comparison or a “control.” For example, without a control group, the researcher may find that domestic violence arrests declined from pre-test to post-test. But the researcher would not be able to definitively attribute that finding to the program without a control group. Perhaps the single experimental group incurred fewer arrests because they matured over their time in the program, regardless of participation in the domestic violence program. Having a randomly assigned control group would allow this consideration to be eliminated, because the equivalent control group would also have naturally matured if that was the case.

Because the control group is meant to be similar to the experimental group on all factors with the exception that the experimental group receives the treatment, the logic is that any differences between the experimental and control group after the treatment must then be attributable only to the treatment itself—everything else occurs equally in both the experimental and control groups and thus cannot be the cause of results. The bottom line is that a control group allows the researcher more confidence to attribute any change in the dependent variable from pre- to post-test and between the experimental and control groups to the treatment—and not another alternative explanation. Absent a control group, the researcher would have much less confidence in the results.
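The role of the control group as a baseline can be shown with simple arithmetic. The figures below are hypothetical, and subtracting the control group's change from the experimental group's change (often called a difference-in-differences) is one simple way to summarize the comparison, not a procedure prescribed by the studies discussed in this chapter.

```python
# Hypothetical average domestic violence arrests per person.
experimental = {"pre": 4.0, "post": 2.5}
control = {"pre": 4.0, "post": 3.0}

def change(group):
    """Post-test minus pre-test for one group."""
    return group["post"] - group["pre"]

experimental_change = change(experimental)  # change seen with the program
control_change = change(control)            # change seen without it (e.g., maturation)

# The part of the experimental group's change not shared by the control group.
net_change = experimental_change - control_change

print(f"Experimental change: {experimental_change:+.1f}")
print(f"Control change:      {control_change:+.1f}")
print(f"Net of control:      {net_change:+.1f}")
```

In this made-up case, a researcher looking only at the experimental group would credit the program with the full drop of 1.5 arrests, while the control group suggests that 1.0 of that drop would have occurred anyway.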

Knowledge about the major components of the classic experimental design and how they contribute to an understanding of cause and effect serves as an important foundation for studying different types of experimental and quasi-experimental designs and their organization. A useful way to become familiar with the components of the experimental design and their important role is to consider the impact on the interpretation of results when one or more components are lacking. For example, what if a design lacked a pre-test? How could this impact the interpretation of post-test results and knowledge about the comparability of the experimental and control group? What if a design lacked random assignment? What are some potential problems that could occur and how could those potential problems impact interpretation of results? What if a design lacked a control group? How does the absence of an equivalent control group affect a researcher’s ability to determine the unique effects of the treatment on the outcomes being measured? The ability to discuss the contribution of a pre-test, random assignment, and a control group—and what is the impact when one or more of those components is absent from a research design—is the key to understanding both experimental and quasi-experimental designs that will be discussed in the remainder of this chapter. As designs lose these important parts and transform from a classic experiment to another experimental design or to a quasi-experiment, they become less useful in isolating the impact that a treatment has on the dependent variable and allow more room for alternative explanations of the results.

One more important point must be made before further delving into experimental and quasi-experimental designs. This point is that rarely, if ever, will the average consumer of research be exposed to the symbols or specific language of the classic experiment, or other experimental and quasi-experimental designs examined in this chapter. In fact, it is unlikely that the average consumer will ever be exposed to the terms pre-test, post-test, experimental group, or random assignment in the popular media, among other terms related to experimental and quasi-experimental designs. Yet, consumers are exposed to research results produced from these and other research designs every day. For example, if a national news organization or your regional newspaper reported a story about the effectiveness of a new drug to reduce cholesterol or the effects of different diets on weight loss, it is doubtful that the results would be reported as produced through a classic experimental design that used a control group and random assignment. Rather, these media outlets would use generally nonscientific terminology such as “results of an experiment showed” or “results of a scientific experiment indicated” or “results showed that subjects who received the new drug had greater cholesterol reductions than those who did not receive the new drug.” Even students who regularly search and read academic articles for use in course papers and other projects will rarely come across such design notation in the research studies they utilize. Depiction of the classic experimental design, including a discussion of its components and their function, simply illustrates the organization and notation of the classic experimental design. Unfortunately, the average consumer has to read between the lines to determine what type of design was used to produce the reported results. Understanding the key components of the classic experimental design allows educated consumers of research to read between those lines.

RESEARCH IN THE NEWS

“Swearing Makes Pain More Tolerable”19

In 2009, Richard Stephens, John Atkins, and Andrew Kingston of the School of Psychology at Keele University conducted a study with 67 undergraduate students to determine if swearing affects an individual’s response to pain. Researchers asked participants to immerse their hand in a container filled with ice-cold water and repeat a preferred swear word. The researchers then asked the same participants to immerse their hand in ice-cold water while repeating a word used to describe a table (a non-swear word). The results showed that swearing increased pain tolerance compared to the non-swearing condition. Participants who used a swear word were able to hold their hand in ice-cold water longer than when they did not swear. Swearing also decreased participants’ perception of pain.

1. This study is an example of a repeated measures design. In this form of experimental design, study participants are exposed to an experimental condition (swearing with hand in ice-cold water) and a control condition (non-swearing with hand in ice-cold water) while repeated outcome measures are taken with each condition, for example, the length of time a participant was able to keep his or her hand submerged in ice-cold water. Conduct an Internet search for “repeated measures design” and explore the various ways such a study could be conducted, including the potential benefits and drawbacks to this design.

2. After researching repeated measures designs, devise a hypothetical repeated measures study of your own.

3. Retrieve and read the full research study “Swearing as a Response to Pain” by Stephens, Atkins, and Kingston while paying attention to the design and methods (full citation information for this study is listed below). Has your opinion of the study results changed after reading the full study? Why or why not?

Full Study Source: Stephens, R., Atkins, J., and Kingston, A. (2009). “Swearing as a response to pain.” NeuroReport 20, 1056–1060.

Variations on the Experimental Design

The classic experimental design is the foundation upon which all experimental and quasi-experimental designs are based. As such, it can be modified in numerous ways to fit the goals (or constraints) of a particular research study. Below are two variations of the experimental design. Again, knowledge about the major components of the classic experiment, how they contribute to an explanation of results, and what the impact is when one or more components are missing provides an understanding of all other experimental designs.

Post-Test Only Experimental Design

The post-test only experimental design could be used to examine the impact of a treatment program on school disciplinary infractions as measured or operationalized by referrals to the principal’s office (see Table 5.2). In this design, the researcher randomly assigns a group of discipline problem students to the experimental group and control group by flipping a coin (heads to the experimental group and tails to the control group). The experimental group then enters the 3-month treatment program. After the program, the researcher compares the number of referrals to the principal’s office between the experimental and control groups over some period of time, for example, discipline referrals at 6 months after the program. The researcher finds that the experimental group has a much lower number of referrals to the principal’s office in the 6-month follow-up period than the control group.

TABLE 5.2 | Post-Test Only Experimental Design

|  |  |  |  |
|---|---|---|---|
| Experimental Group | R | X | O |
| Control Group | R |  | O |
|  |  |  | Post-Test |

Several issues arise in this example study. The researcher would not know if discipline problems decreased, increased, or stayed the same from before to after the treatment program because the researcher did not have a count of disciplinary referrals prior to the treatment program (i.e., a pre-test). Although the groups were randomly assigned and are presumed equivalent, the absence of a pre-test means the researcher cannot confirm that the experimental and control groups were equivalent before the treatment was administered, particularly on the number of referrals to the principal’s office. The groups could have differed by a chance occurrence even with random assignment, and any such differences between the groups could potentially explain the post-test difference in the number of referrals to the principal’s office. For example, if the control group included much more serious or frequent discipline problem students than the experimental group by chance, this difference, rather than the treatment, might explain the lower number of referrals for the experimental group.
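The coin-flip procedure used in this example can be sketched in a few lines. The student identifiers, the seed, and the function name `coin_flip_assign` are hypothetical. Note that, unlike shuffling a list and splitting it in half, independent coin flips do not guarantee equal group sizes.

```python
import random

def coin_flip_assign(subjects, rng):
    """Assign each subject by an independent fair coin flip:
    heads -> experimental group, tails -> control group."""
    experimental, control = [], []
    for subject in subjects:
        if rng.random() < 0.5:   # "heads"
            experimental.append(subject)
        else:                    # "tails"
            control.append(subject)
    return experimental, control

rng = random.Random(2024)  # fixed seed so the run is repeatable
students = [f"student_{i:03d}" for i in range(1, 101)]  # hypothetical IDs

experimental, control = coin_flip_assign(students, rng)
print(f"Experimental group size: {len(experimental)}")
print(f"Control group size:      {len(control)}")
```

Because no pre-test exists in this design, nothing in the procedure itself reveals whether a particular run of flips happened to produce lopsided groups.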

Experimental Design with Two Treatments and a Control Group

This design could be used to determine the impact of boot camp versus juvenile detention on post-release recidivism (see Table 5.3). Recidivism in this study is operationalized as re-arrest for delinquent behavior. First, a population of known juvenile delinquents is randomly assigned to either boot camp, juvenile detention, or a control condition where they receive no sanction. To accomplish random assignment to groups, the researcher places the names of all youth into a hat, draws them one at a time, and assigns the drawn names to the groups in rotation. For example, the first name pulled goes into experimental group 1, the next into experimental group 2, the next into the control group, and so on. Once randomly assigned, the experimental group youth receive either boot camp or juvenile detention for a period of 3 months, whereas members of the control group are released on their own recognizance to their parents. At the end of the experiment, the researcher compares the re-arrest activity of boot camp participants, detained youth, and control group members during a 6-month follow-up period.

TABLE 5.3 | Experimental Design with Two Treatments and a Control Group

|  |  |  |  |  |
|---|---|---|---|---|
| Experimental Group | R | O1 | X1 | O2 |
| Experimental Group | R | O1 | X2 | O2 |
| Control Group | R | O1 |  | O2 |
|  |  | Pre-Test |  | Post-Test |

This design has several advantages. First, it includes all major components of the classic experimental design, and simply adds an additional treatment for comparison purposes. Random assignment was utilized and this means that the groups have a higher probability of being equivalent on all factors that could impact the post-test. Thus, random assignment in this example helps to ensure the only differences between the groups are the treatment conditions. Without random assignment, there is a greater chance that one group of youth was somehow different, and this difference could impact the post-test. For example, if the boot camp youth were much less serious and frequent delinquents than the juvenile detention youth or control group youth, the results might erroneously show that the boot camp reduced recidivism when in fact the youth in boot camp may have been the “best risks,” unlikely to get re-arrested with or without boot camp. The pre-test in the example above allows the researcher to determine change in re-arrests from pre-test to post-test. Thus, the researcher can determine if delinquent behavior, as measured by re-arrest, increased, decreased, or remained constant from pre- to post-test. The pre-test also allows the researcher to confirm that the random assignment process resulted in equivalent groups based on the pre-test. Finally, the presence of a control group allows the researcher to have more confidence that any differences in the post-test are due to the treatment. For example, if the control group had more re-arrests than the boot camp or juvenile detention experimental groups 6 months after their release from those programs, the researcher would have more confidence that the programs produced fewer re-arrests because the control group members were the same as the experimental groups; the only difference was that they did not receive a treatment.
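The hat-drawing procedure described for this design amounts to shuffling the names and dealing them out to the groups in rotation. A minimal sketch follows; the youth identifiers, group labels, seed, and function name `draw_from_hat` are invented for the example.

```python
import random

def draw_from_hat(names, group_labels, rng):
    """Shuffle the names (the 'hat'), then deal them to groups in rotation:
    first draw to the first group, second draw to the second, and so on."""
    hat = list(names)
    rng.shuffle(hat)
    groups = {label: [] for label in group_labels}
    for position, name in enumerate(hat):
        label = group_labels[position % len(group_labels)]
        groups[label].append(name)
    return groups

rng = random.Random(11)  # fixed seed so the run is repeatable
youth = [f"youth_{i:02d}" for i in range(1, 31)]  # hypothetical IDs
labels = ["boot camp", "juvenile detention", "control"]

groups = draw_from_hat(youth, labels, rng)
for label in labels:
    print(f"{label}: {len(groups[label])} youth")
```

Dealing in rotation keeps the group sizes as equal as possible, while the shuffle is what makes the assignment random.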

The one key feature of experimental designs is that they all retain random assignment. This is why they are considered “experimental” designs. Sometimes, however, experimental designs lack a pre-test. Knowledge of the usefulness of a pre-test demonstrates the potential problems with those designs where it is missing. For example, in the post-test only experimental design, a researcher would not be able to make a determination of change in the dependent variable from pre- to post-test. Perhaps most importantly, the researcher would not be able to confirm that the experimental and control groups were in fact equivalent on a pre-test measure before the introduction of the treatment. Even though both groups were randomly assigned, and probability theory suggests they should be equivalent, without a pre-test measure the researcher could not confirm similarity because differences could occur by chance even with random assignment. If there were any differences at the post-test between the experimental group and control group, the results might be due to some explanation other than the treatment, namely that the groups differed prior to the administration of the treatment. The same limitation could apply in any form of experimental design that does not utilize a pre-test for confirmation purposes.

Understanding the contribution of a pre-test to an experimental design shows that it is a critical component. It provides a measure of change and also gives the researcher more confidence that the observed results are due to the treatment, and not some difference between the experimental and control groups. Despite the usefulness of a pre-test, however, perhaps the most critical ingredient of any experimental design is random assignment. It is important to note that all experimental designs retain random assignment.

Experimental Designs Are Rare in Criminal Justice and Criminology

The classic experiment is the foundation for other types of experimental and quasi-experimental designs. The unfortunate reality, however, is that the classic experiment and other experimental designs are few and far between in criminal justice.20 Recall that one of the major components of an experimental design is random assignment. Achieving random assignment is often a barrier to experimental research in criminal justice. Achieving random assignment might, for example, require the approval of the chief (or city council or both) of a major metropolitan police agency to allow researchers to randomly assign patrol officers to certain areas of a city and/or randomly assign police officer actions. Recall the MDVE. This experiment required the full cooperation of the chief of police and other decision-makers to allow researchers to randomly assign police actions. In another example, achieving random assignment might require a judge to randomly assign a group of youthful offenders to a certain juvenile court sanction (experimental group), and another group of similar youthful offenders to no sanction or an alternative sanction as a control group.21 In sum, random assignment typically requires the cooperation of a number of individuals, and sometimes that cooperation is difficult to obtain.

Even when random assignment can be accomplished, sometimes it is not implemented correctly and the random assignment procedure breaks down. This is another barrier to conducting experimental research. For example, in the MDVE, researchers randomly assigned officer responses, but the officers did not always follow the assigned course of action. Moreover, some believe that the random assignment of criminal justice programs, sentences, or officer responses may be unethical in certain circumstances, and even a violation of the rights of citizens. For example, some believe it is unfair when random assignment results in some delinquents being sentenced to boot camp while others get assigned to a control group without any sanction at all or a less restrictive sanction than boot camp. In the MDVE, some believe it is unfair that some suspects were arrested and received an official record whereas others were not arrested for the same type of behavior. In other cases, subjects in the experimental group may receive some benefit from the treatment that is essentially denied to the control group for a period of time, and this can become an issue as well.

There are other important reasons why random assignment is difficult to accomplish. Random assignment may, for example, involve a disruption of the normal procedures of agencies and their officers. In the MDVE, officers had to adjust their normal and established routine, and this was a barrier at times in that study. Shadish, Cook, and Campbell also note that random assignment may not always be feasible or desirable when quick answers are needed.22 This is because experimental designs sometimes take a long time to produce results. In addition to the time required in planning and organizing the experiment, and treatment delivery, researchers may need several months if not years to collect and analyze the data before they have answers. This is particularly important because time is often of the essence in criminal justice research, especially in research efforts testing the effect of some policy or program where it is not feasible to wait years for answers. Waiting for the results of an experimental design means that many policy-makers may make decisions without the results.

Quasi-Experimental Designs

In general terms, quasi-experiments include a group of designs that lack random assignment. Quasi-experiments may also lack other parts, such as a pre-test or a control group, just like some experimental designs. The absence of random assignment, however, is the ingredient that transforms an otherwise experimental design into a quasi-experiment. Lacking random assignment is a major disadvantage because it increases the chances that the experimental and control groups differ on relevant factors before the treatment—both known and unknown—differences that may then emerge as alternative explanations of the outcomes.

Just like experimental designs, quasi-experimental designs can be organized in many different ways. This section will discuss three types of quasi-experiments: nonequivalent group design, one-group longitudinal design, and two-group longitudinal design.

Nonequivalent Group Design

The nonequivalent group design is perhaps the most common type of quasi-experiment.23 Notice that it is very similar to the classic experimental design with the exception that it lacks random assignment (see Table 5.4). Additionally, what was labeled the experimental group in an experimental design is sometimes called the treatment group in the nonequivalent group design. What was labeled the control group in the experimental design is sometimes called the comparison group in the nonequivalent group design. This terminological distinction is an indicator that the groups were not created through random assignment.

TABLE 5.4 | Nonequivalent Group Design

| | | Pre-Test | | Post-Test |
|---|---|---|---|---|
| Treatment Group | NR | O1 | X | O2 |
| Comparison Group | NR | O1 | | O2 |

NR = Not Randomly assigned

One of the main problems with the nonequivalent group design is that it lacks random assignment, and without random assignment, there is a greater chance that the treatment and comparison groups may be different in some way that can impact study results. Take, for example, a nonequivalent group design where a researcher is interested in whether an aggression-reduction treatment program can reduce inmate-on-inmate assaults in a prison setting. Assume that the researcher asked for inmates who had previously been involved in assaultive activity to volunteer for the aggression-reduction program. Suppose the researcher placed the first 50 volunteers into the treatment group and the next 50 volunteers into the comparison group. Note that this method of assignment is not random but rather first come, first served.

Because the study utilized volunteers and there was no random assignment, it is possible that the first 50 volunteers placed into the treatment group differed significantly from the last 50 volunteers who were placed in the comparison group. This can lead to alternative explanations for the results. For example, if the treatment group was much younger than the comparison group, the researcher may find at the end of the program that the treatment group still maintained a higher rate of assaults than the comparison group, even after the aggression-reduction program. The conclusion might be that the aggression program actually increased the level of violence among the treatment group. This conclusion would likely be spurious and may be due to the age differential between the treatment and comparison groups. Indeed, research has revealed that younger inmates are significantly more likely to engage in prison assaults than older inmates. The fact that the treatment group incurred more assaults than the comparison group after the aggression-reduction program may reflect only the age differential between the groups, rather than showing that the program had no effect or that it somehow increased aggression. The previous example highlights the importance of random assignment and the potential problems that can occur in its absence.

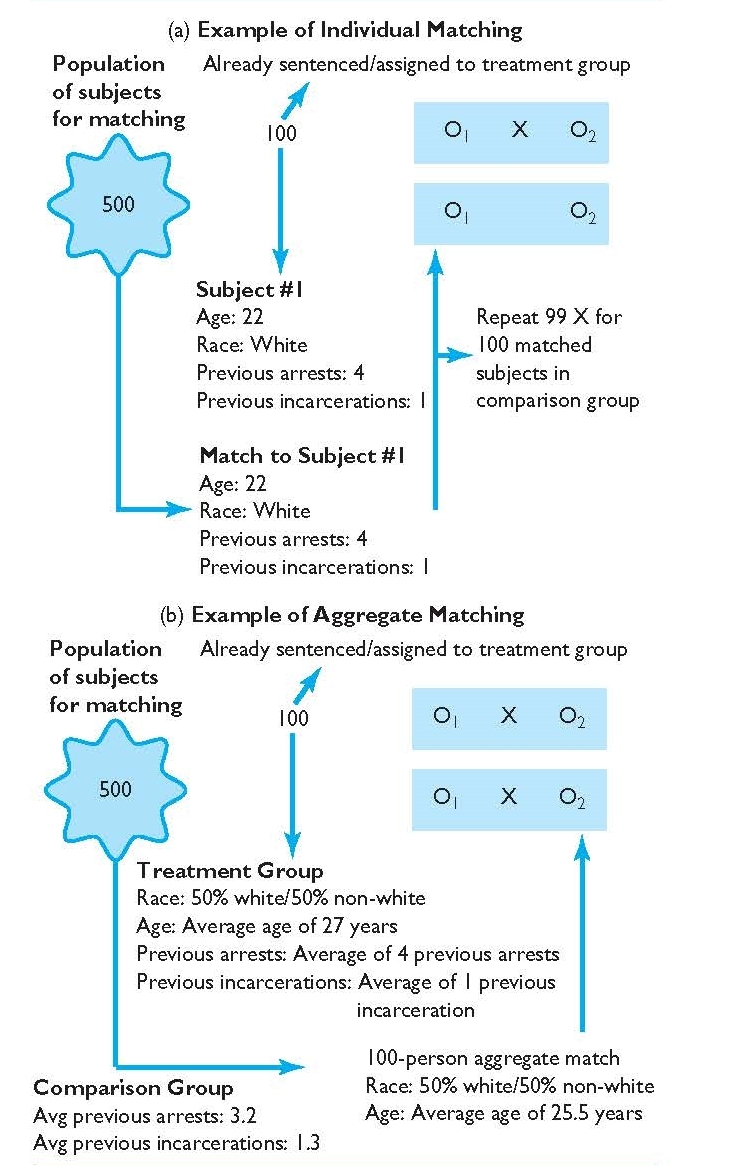

Although researchers who utilize a quasi-experimental design are not able to randomly assign their subjects to groups, they can employ other techniques in an attempt to make the groups as equivalent as possible on known or measured factors before the treatment is given. In the example above, it is likely that the researcher would have known the age of inmates, their prior assault record, and various other pieces of information (e.g., previous prison stays). Through a technique called matching, the researcher could make sure the treatment and comparison groups were “matched” on these important factors before administering the aggression reduction program to the treatment group. This type of matching can be done individual to individual (e.g., subject #1 in treatment group is matched to a selected subject #1 in comparison group on age, previous arrests, gender), or aggregately, such that the comparison group is similar to the treatment group overall (e.g., average ages between groups are similar, equal proportions of males and females). Knowledge of these and other important variables, for example, would allow the researcher to make sure that the treatment group did not have heavy concentrations of younger or more frequent or serious offenders than the comparison group—factors that are related to assaultive activity independent of the treatment program. In short, matching allows the researcher some control over who goes into the treatment and comparison groups so as to balance these groups on important factors absent random assignment. If unbalanced on one or more factors, these factors could emerge as alternative explanations of the results. Figure 5.3 demonstrates the logic of matching both at the individual and aggregate level in a quasi-experimental design.
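The matching logic described above can be sketched in Python. This is only one simple way to do it, assumed here for illustration: a greedy nearest-neighbor rule that pairs each treatment inmate with the closest unused candidate on age and prior assaults. The inmates, their values, and the `Inmate`, `distance`, and `match_individually` names are all hypothetical, and real studies often use more refined matching procedures.

```python
from dataclasses import dataclass
from statistics import mean

@dataclass(frozen=True)
class Inmate:
    inmate_id: str
    age: int
    prior_assaults: int

def distance(a, b):
    """Simple dissimilarity: summed absolute gaps on the matching factors."""
    return abs(a.age - b.age) + abs(a.prior_assaults - b.prior_assaults)

def match_individually(treatment, pool):
    """Pair each treatment inmate with the closest unused pool candidate."""
    available = list(pool)
    pairs = []
    for subject in treatment:
        if not available:
            break  # no candidates left to match
        best = min(available, key=lambda candidate: distance(subject, candidate))
        available.remove(best)  # each candidate is used at most once
        pairs.append((subject, best))
    return pairs

# Hypothetical inmates; values are invented for illustration.
treatment = [Inmate("T1", 22, 3), Inmate("T2", 35, 1), Inmate("T3", 28, 2)]
pool = [
    Inmate("C1", 41, 0), Inmate("C2", 23, 3), Inmate("C3", 34, 1),
    Inmate("C4", 29, 4), Inmate("C5", 27, 2),
]

pairs = match_individually(treatment, pool)
matched_ids = [(t.inmate_id, c.inmate_id) for t, c in pairs]

# Aggregate check: are the matched groups similar on average age?
treatment_mean_age = mean(t.age for t, _ in pairs)
comparison_mean_age = mean(c.age for _, c in pairs)

print(matched_ids)
print(treatment_mean_age, comparison_mean_age)
```

The first part of the sketch corresponds to individual matching, and the final comparison of average ages corresponds to checking balance at the aggregate level. Note that the code can only balance the two factors it was given; any factor missing from the records remains unmatched.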

Matching is an important part of the nonequivalent group design. By matching, the researcher can approximate equivalence between the groups on important variables that may influence the post-test. However, it is important to note that a researcher can only match subjects on factors that they have information about—a researcher cannot match the treatment and comparison group members on factors that are unmeasured or otherwise unknown but which may still impact outcomes. For example, if the researcher has no knowledge about the number of previous incarcerations, the researcher cannot match the treatment and comparison groups on this factor. Matching also requires that the information used for matching is valid and reliable, which is not always the case. Agency records, for example, are notorious for inconsistencies, errors, omissions, and for being dated, but are often utilized for matching purposes. Asking survey questions to generate information for matching (for example, how many times have you been incarcerated?) can also be problematic because some respondents may lie, forget, or exaggerate their behavior or experiences.

In addition to the above considerations, the more factors a researcher wishes to match the group members on, the more difficult it becomes to find appropriate matches. Matching on prior arrests or age is less complex than matching on several additional pieces of information. Finally, matching is never considered superior to random assignment when the goal is to construct equivalent groups. This is because random assignment offers a much higher likelihood of equivalence on all factors, both those that are measured and those that are unmeasured or unknown to the researcher. Thus, the results produced from a nonequivalent group design, even with matching, are at a greater risk of alternative explanations than an experimental design that features random assignment.

FIGURE 5.3 | (a) Individual Matching (b) Aggregate Matching

The previous discussion is not to suggest that the nonequivalent group design cannot be useful in answering important research questions. Rather, it is to suggest that the nonequivalent group design, and hence any quasi-experiment, is more susceptible to alternative explanations than the classic experimental design because of the absence of random assignment. As a result, a researcher must be prepared to rule out potential alternative explanations. Quasi-experimental designs that lack a pre-test or a comparison group are even less desirable than the nonequivalent group design and are subject to additional alternative explanations because of these missing parts. Although the quasi-experiment may be all that is available and still can serve as an important design in evaluating the impact of a particular treatment, it is not preferable to the classic experiment. Researchers (and consumers) must be attuned to the potential issues of this design so as to make informed conclusions about the results produced from such research studies.

RESEARCH IN THE NEWS

The Effects of Red Light Camera (RLC) Enforcement